U.S. regulation of artificial intelligence (AI) in medical devices will involve cooperation among multiple centers and offices within the FDA. On March 15, 2024, the FDA released “Artificial Intelligence and Medical Products: How CBER, CDER, CDRH, and OCP are Working Together,” which outlines how the agency’s medical product centers plan to protect public health while fostering responsible innovation in AI used in medical products and their development.

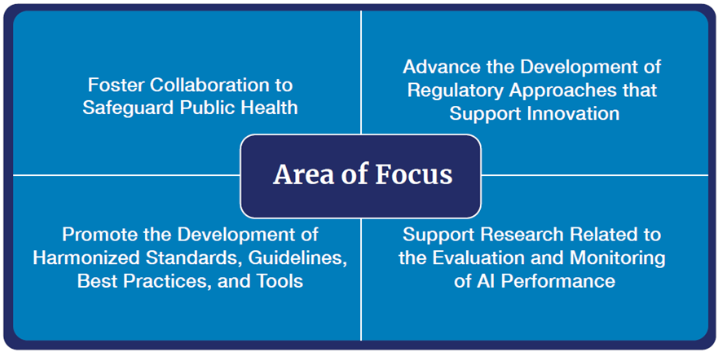

The paper outlines four priorities for cross-center collaboration to foster consistency across the FDA in regulating the development, deployment, use, and maintenance of AI technologies throughout the medical product life cycle.

The four priorities are:

Foster Collaboration to Safeguard Public Health

- Solicit input from a range of interested parties to consider critical aspects of AI use in medical products, such as transparency, explainability, governance, bias, cybersecurity, and quality assurance.

- Promote the development of educational initiatives to support regulatory bodies, health care professionals, patients, researchers, and industry as they navigate the safe and responsible use of AI in medical product development and in medical products.

- Continue to work closely with global collaborators to promote international cooperation on standards, guidelines, and best practices to encourage consistency and convergence in the use and evaluation of AI across the medical product landscape.

Advance the Development of Regulatory Approaches That Support Innovation

- Continue to monitor and evaluate trends and emerging issues to detect potential knowledge gaps and opportunities, including in regulatory submissions, allowing for timely adaptations that provide clarity for the use of AI in the medical product life cycle.

- Support regulatory science efforts to develop methodology for evaluating AI algorithms, identifying and mitigating bias, and ensuring the robustness and resilience of AI algorithms to withstand changing clinical inputs and conditions.

- Leverage and continue to build upon existing initiatives for the evaluation and regulation of AI use in medical products and in medical product development, including in manufacturing.

- Issue guidance regarding the use of AI in medical product development and in medical products, including: final guidance on marketing submission recommendations for predetermined change control plans for AI-enabled device software functions; draft guidance on life cycle management considerations and premarket submission recommendations for AI-enabled device software functions; and draft guidance on considerations for the use of AI to support regulatory decision-making for drugs and biological products.

Promote the Development of Standards, Guidelines, Best Practices, and Tools for the Medical Product Life Cycle

- Continue to refine and develop considerations for evaluating the safe, responsible, and ethical use of AI in the medical product life cycle (e.g., whether it provides adequate transparency and addresses safety and cybersecurity concerns).

- Identify and promote best practices for long-term safety and real-world performance monitoring of AI-enabled medical products.

- Explore best practices for documenting and ensuring that data used to train and test AI models are fit for use, including adequately representing the target population.

- Develop a framework and strategy for quality assurance of AI-enabled tools or systems used in the medical product life cycle, emphasizing continued monitoring and mitigation of risks.

Support Research Related to the Evaluation and Monitoring of AI Performance

- Identify projects that highlight different points where bias can be introduced in the AI development life cycle and how it can be addressed, including through risk management.

- Support projects that consider health inequities associated with the use of AI in medical product development to promote equity and ensure data representativeness, leveraging ongoing diversity, equity, and inclusion efforts.

- Support the ongoing monitoring of AI tools in medical product development within demonstration projects to ensure adherence to standards and maintain performance and reliability throughout their life cycle.